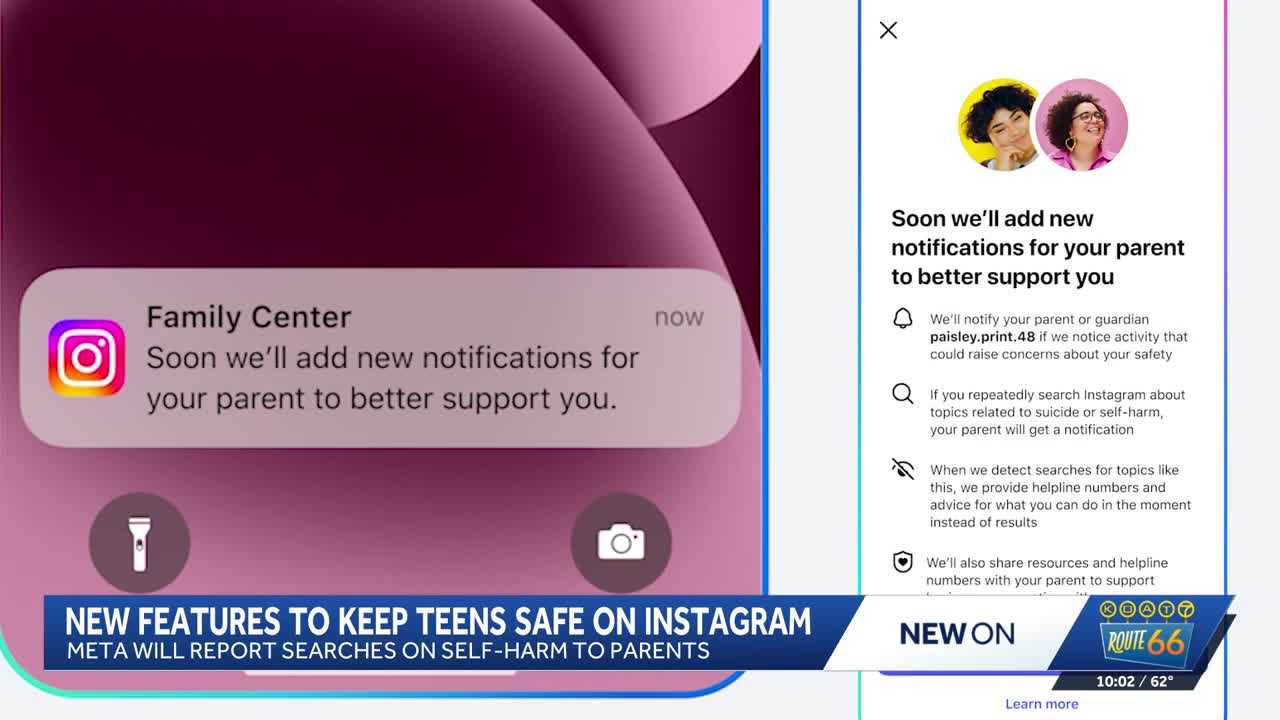

Meta is introducing a new feature on Instagram that will alert parents if their children repeatedly search for terms linked to suicide or self-harm. The company says the feature is part of broader efforts to provide critical information quickly when a teen may be in trouble.

Starting next week, parents enrolled in Instagram’s “Supervision” feature will receive notifications by text, email, or in-app alert if their teen repeatedly searches for these topics.

These searches are typically blocked, and teens are directed to resources and helplines. Tapping the alert will open a full-screen message with guidance on how to support the teen.

Instagram clarified that this rollout is not related to the ongoing lawsuit from the state of New Mexico, which accuses Meta’s platforms of being a “breeding ground” for child sexual exploitation. The company stated, “Launching a feature like this takes time — from consulting with experts both internally and externally, to the technical development — and we’ve been working on it for months.”

The “Supervision” feature is designed for users aged 13 to 17, allowing parents to monitor screen time, set time limits, and limit followers.

“We understand how sensitive these issues are and how distressing it could be for a parent to receive an alert like this. The vast majority of teens do not try to search for suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can offer support. These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen,” a Meta spokesperson wrote to KOAT.

Meta did not disclose how many searches it takes to trigger a notification, saying it does not want teens to be able to circumvent the system. Teens will also not be notified when their parents receive an alert.

Meta has not publicly disclosed how many parents are currently enrolled in Instagram’s parental supervision features. In addition to the New Mexico lawsuit, Meta is facing trial in California over claims that its platforms are deliberately designed to make children addicted to them.